How to Lead When You Can't Predict What's Coming: Think in Scenarios

Something I've been noticing in a lot of my coaching conversations lately: Decisions feel harder right now.

Not because product leaders suddenly got worse at making them. If anything, making decisions with incomplete information has always been one of the core skills of this job. You rarely have all the data. You're used to operating in ambiguity. That's not new.

But the kind of uncertainty we're dealing with right now feels different. It's not just "we don't have enough user research" or "the market data is inconclusive." It's geopolitical instability. It's predicted inflation that might—or might not—reshape budgets. It's AI changing the rules of what's possible faster than most organizations can absorb. It's all of this happening at the same time.

And here's the tension: Organizations still have money to spend. They still need to innovate. Standing still isn't a strategy. But spending boldly when the ground keeps shifting? That doesn't feel great, either.

So what do you do?

You do what you've always done: You lead. You make decisions. Because that's still the job. In fact, I'd argue it's one of the most important things you do as a product leader. In the PLwheel, I describe it as providing directional clarity—giving your team, your product organization, and the broader company a sense of where you're headed and why.

But here's the honest question: How do you provide directional clarity when you're genuinely not sure what's coming next?

I think the answer is to stop trying to predict the future—and start thinking in scenarios.

Here's what I mean: Instead of locking onto one version of what the future holds, you explore several. You don't necessarily need to be right about any single one, but you do need to be prepared for a range of possibilities. And (this is the part I find really powerful) you can use those different futures as lenses for your current work.

Here's what that might look like in practice.

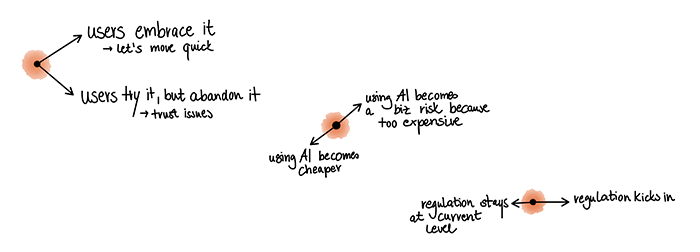

Let's say your team is debating whether to build agentic workflows into your product: AI that doesn't just suggest next steps, but actually executes multi-step tasks on behalf of the user.

Instead of jumping to the conclusion that this is the only right thing to do right now—because everyone seems convinced that customers will leave if you don't paint AI all over your product—you could pause and draw out a few different scenarios first.

What if users embrace it and start expecting every product to handle routine tasks autonomously, meaning you're late if you don't ship it now?

What if users try it, feel uncomfortable not being in control, and abandon the feature after a week?

What if new regulation requires explicit user consent for every single automated action, making the whole workflow clunky and slow?

What if your customers don't actually want an AI agent at all—what they really want is smarter reports and dashboards that surface the right information without them having to dig for it?

None of these is THE future. But exploring them helps you figure out where the truth most likely lies, before you commit.

Another example: Think about your product strategy for the next 12 months. You probably have a bet in there somewhere, a direction you're leaning toward. Now push it to an extreme. What if that bet is wildly successful? What would you need to be ready for? What if it completely fails? What's your fallback? These aren't comfortable questions. But they're the kind of questions that help you build strategies that hold up under pressure, not just under ideal conditions.

"My experience will be everyone's experience"—and other logical traps

There's a logical trap I see a lot in the predictions that are floating around right now—in articles, on LinkedIn, at conferences. It goes something like this: Someone who's living on the absolute cutting edge of a new technology shares their experience, and the implicit message is "this is everyone's future." But that's a fallacy.

Early adopters are not representative.

What works for someone who's fully AI-native and comfortable working out of a terminal all day is not necessarily what will work for your organization, your team, or your customers. Their experience is one data point. One scenario. Not the truth.

I think what's more useful is to read those stories—and I do think you should still read them—but to treat each one as one possible future among many.

Ask yourself: What's the underlying insight here? Not the specific prediction, but the pattern underneath it. That's where the real value is.

Teresa Torres and I talk about this in a forthcoming episode of our podcast (Predicting the Future, airing April 21, 2026), and she put it in a way that stuck with me: When you push scenarios to the extreme, they don't just prepare you for multiple outcomes. They actually help you build better products right now. Because they force you to explore more of the problem space and the solution space than you would if you just locked onto one prediction.

So here's what I'd encourage you to try: Next time you read a bold prediction about AI, the economy, or the future of product management, pause and say, "Okay, that's one possible future. What are two or three other ways this could play out? And what would each of them mean for the work my team and I are doing today?"

You don't need to have the answer. Nobody does right now. But being the person who says "I don't know exactly where this is headed, but here's how I'm thinking about it" is not a weakness. That's directional clarity. And it's one of the strongest things you can do as a leader.

This post was first published in the spring edition of my quarterly newsletter. If you want insights like this in your inbox first, subscribe here.